Meta and Oracle Leverage NVIDIA Spectrum-X for AI Data Center Innovations

Meta and Oracle Upgrade AI Infrastructure

Meta and Oracle are enhancing their artificial intelligence (AI) data centers by incorporating NVIDIA’s Spectrum-X Ethernet switches. This technology is specifically designed to meet the increasing demands of expansive AI systems. By adopting Spectrum-X, both companies aim to facilitate more efficient AI training and expedite deployment processes across extensive computing clusters.

Transforming Data Centers into AI Factories

According to Jensen Huang, the CEO and founder of NVIDIA, data centers are evolving into “giga-scale AI factories” due to the rise of trillion-parameter models. He likened Spectrum-X to the “nervous system” that interlinks millions of GPUs, enabling the training of the most complex AI models created to date.

Oracle’s Strategic Integration of Spectrum-X

Oracle plans to combine Spectrum-X Ethernet with NVIDIA’s Vera Rubin architecture to build large-scale AI factories. Mahesh Thiagarajan, Oracle Cloud Infrastructure’s executive vice president, indicated that this integration will make it more efficient to connect millions of GPUs, helping customers train and deploy new AI models faster.

Meta’s Expansion with Spectrum-X

Meanwhile, Meta is expanding its AI infrastructure by integrating Spectrum-X Ethernet switches into the Facebook Open Switching System (FBOSS), its in-house platform for managing network switches. Gaya Nagarajan, Meta’s vice president of networking engineering, emphasized that the company’s next-generation network must be both open and efficient to accommodate increasingly complex AI models while serving billions of users.

Building Flexible AI Systems

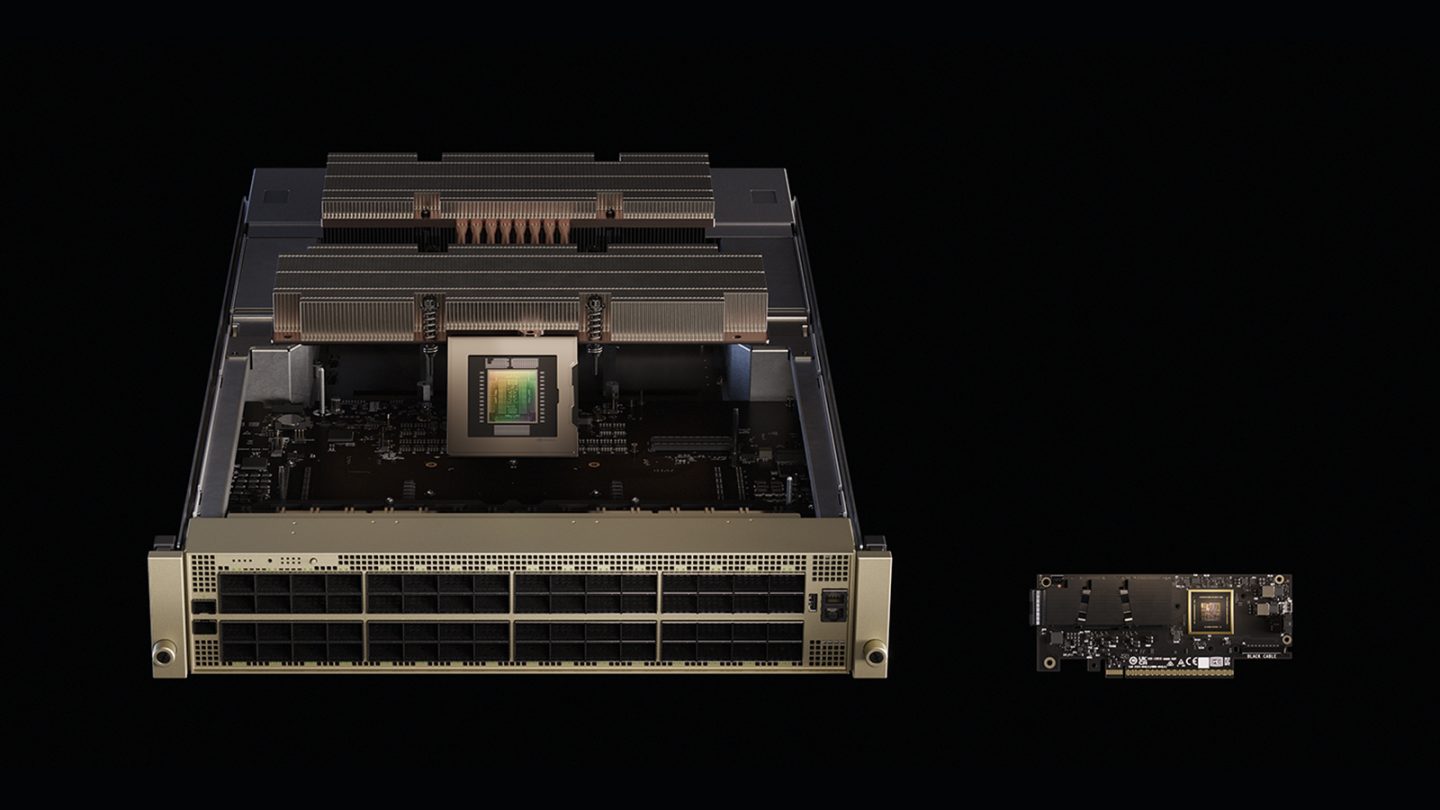

Joe DeLaere, who leads NVIDIA’s Accelerated Computing Solution Portfolio for Data Centers, highlighted the need for flexibility as data centers become more complex. NVIDIA’s MGX system features a modular design that allows partners to mix and match various CPUs, GPUs, storage, and networking elements as necessary.

Interoperability and Future Readiness

This system also champions interoperability, enabling organizations to apply the same design principles across various hardware generations. DeLaere stated, “It provides flexibility, a quicker time to market, and future readiness.” As AI models expand, achieving power efficiency in data centers has become a significant challenge.

Strategies for Increased Power Efficiency

NVIDIA is focusing on improving energy utilization and scalability by collaborating closely with vendors specializing in power and cooling solutions. For instance, the transition to 800-volt DC power delivery minimizes heat loss and boosts efficiency. They’re also implementing power-smoothing technologies to reduce electrical spikes, cutting maximum power requirements by as much as 30% and allowing for increased computational capacity.
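To see why higher-voltage delivery and power smoothing matter, a rough back-of-the-envelope sketch in Python can help. It relies on the standard relation that, for a fixed power draw, current falls as voltage rises and resistive loss scales with the square of the current. The 54-volt comparison point, the conductor resistance, the rack power, and the facility budget below are illustrative assumptions, not figures from NVIDIA; only the 800-volt level and the 30% peak reduction come from the article.

```python
def resistive_loss_watts(power_w: float, voltage_v: float, resistance_ohm: float) -> float:
    """Heat dissipated in a conductor carrying `power_w` at `voltage_v` (P_loss = I^2 * R)."""
    current_a = power_w / voltage_v
    return current_a ** 2 * resistance_ohm


def racks_supported(budget_w: int, peak_per_rack_w: int) -> int:
    """Whole racks that fit in a facility power budget sized for peak draw."""
    return budget_w // peak_per_rack_w


# --- Assumed, illustrative values (not from the article) ---
RACK_POWER_W = 100_000          # hypothetical rack drawing 100 kW
CONDUCTOR_OHM = 0.001           # hypothetical 1 milliohm distribution path
LOW_VOLTAGE_V = 54              # assumed low-voltage DC comparison point
FACILITY_BUDGET_W = 10_000_000  # hypothetical 10 MW facility

# --- Values taken from the article ---
HIGH_VOLTAGE_V = 800            # 800-volt DC power delivery
PEAK_REDUCTION_PCT = 30         # "as much as 30%" lower maximum power

loss_low = resistive_loss_watts(RACK_POWER_W, LOW_VOLTAGE_V, CONDUCTOR_OHM)
loss_high = resistive_loss_watts(RACK_POWER_W, HIGH_VOLTAGE_V, CONDUCTOR_OHM)
loss_ratio = loss_low / loss_high  # equals (800 / 54) ** 2

# Integer arithmetic keeps the rack counts exact.
smoothed_peak_w = RACK_POWER_W * (100 - PEAK_REDUCTION_PCT) // 100
baseline_racks = racks_supported(FACILITY_BUDGET_W, RACK_POWER_W)
smoothed_racks = racks_supported(FACILITY_BUDGET_W, smoothed_peak_w)

print(f"Loss at {LOW_VOLTAGE_V} V: {loss_low:.1f} W, at {HIGH_VOLTAGE_V} V: {loss_high:.3f} W")
print(f"Racks within budget: {baseline_racks} before smoothing, {smoothed_racks} after")
```

Under these assumed numbers, the same conductor dissipates roughly 220 times less heat at 800 volts, and the same facility budget covers 142 racks instead of 100. Real deployments involve conversion losses and safety margins that this simplification ignores.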

Scaling AI Data Centers

NVIDIA’s MGX system plays a critical role in scaling data centers. Gilad Shainer, NVIDIA’s senior vice president of networking, explained that MGX racks can host both compute and switching components, supporting NVLink for scale-up connections and Spectrum-X Ethernet for scale-out growth.

Unified Systems for Distributed AI Training

On top of that, MGX can integrate multiple AI data centers into a cohesive system, which is necessary for organizations like Meta that need to support extensive distributed AI training. Depending on the required distance, these sites can connect through dark fiber or additional MGX switches, ensuring high-speed connections across different regions.

The Importance of Open Networking

Meta’s use of Spectrum-X reflects the growing significance of open networking solutions. Although FBOSS will serve as their primary network operating system, Spectrum-X can support various others, such as Cumulus, SONiC, and Cisco’s NOS, through strategic partnerships. This adaptability allows businesses to standardize their infrastructures using the systems that suit them best.

Enhancing the AI Ecosystem

NVIDIA views Spectrum-X as a means to enhance AI infrastructure, making it more efficient and accessible across different scales. Shainer remarked that the Ethernet platform was specifically designed for AI tasks like training and inference, boasting up to 95% effective bandwidth, significantly surpassing traditional Ethernet, which typically achieves around 60% due to flow collisions.
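The gap between 95% and 60% effective bandwidth can be made concrete with simple arithmetic. The sketch below assumes a hypothetical 400 Gb/s link and a 100-gigabyte transfer, neither of which appears in the article, and treats effective bandwidth as a flat multiplier on line rate, which is a simplification.

```python
def effective_throughput_gbps(link_gbps: float, efficiency: float) -> float:
    """Usable throughput when only a fraction of the line rate is effective."""
    if not 0.0 < efficiency <= 1.0:
        raise ValueError("efficiency must be in (0, 1]")
    return link_gbps * efficiency


def transfer_seconds(payload_gigabytes: float, link_gbps: float, efficiency: float) -> float:
    """Time to move a payload over one link at the given effective bandwidth."""
    payload_gigabits = payload_gigabytes * 8
    return payload_gigabits / effective_throughput_gbps(link_gbps, efficiency)


# --- Assumed, illustrative values (not from the article) ---
LINK_GBPS = 400.0    # hypothetical line rate
PAYLOAD_GB = 100.0   # hypothetical amount of data to exchange

# --- Efficiency figures quoted in the article ---
SPECTRUM_X_EFFICIENCY = 0.95
TRADITIONAL_EFFICIENCY = 0.60

spectrum_x_time = transfer_seconds(PAYLOAD_GB, LINK_GBPS, SPECTRUM_X_EFFICIENCY)
traditional_time = transfer_seconds(PAYLOAD_GB, LINK_GBPS, TRADITIONAL_EFFICIENCY)
speedup = traditional_time / spectrum_x_time

print(f"Spectrum-X: {spectrum_x_time:.2f} s, traditional Ethernet: {traditional_time:.2f} s")
print(f"Relative speedup: {speedup:.2f}x")
```

Whatever link speed is assumed, the ratio stays the same: 0.95 / 0.60, or about 1.58 times the usable throughput.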

Looking Ahead: Vera Rubin Architecture

DeLaere mentioned that NVIDIA’s upcoming Vera Rubin architecture is expected to be commercially available in late 2026, with the Rubin CPX product scheduled for release by the end of that year. Both will work alongside Spectrum-X networking and MGX systems to support the next generation of AI factories.

Collaborative Power Solutions

To facilitate the transition to 800-volt DC power, NVIDIA is teaming up with partners across the spectrum, from chip manufacturers like Onsemi and Infineon to data center design experts like Schneider Electric and Siemens. A detailed white paper on this methodology will be presented at the OCP Summit.

Performance Benefits for Hyperscalers

Spectrum-X Ethernet was specifically engineered for distributed computing and AI workloads. Shainer highlighted its adaptive routing and telemetry-based congestion management, which eliminate network hotspots and maintain stable performance. These attributes enable faster training and inference speeds while accommodating multiple workloads without interference.
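The intuition behind load-aware routing can be illustrated with a toy model. The sketch below is not NVIDIA’s algorithm; it simply contrasts a static, hash-style path choice with a greedy choice that places each flow on the currently least-loaded path. The flow sizes, the four parallel paths, and the function names are all hypothetical.

```python
from typing import List


def static_assignment(flow_sizes: List[int], num_paths: int) -> List[int]:
    """Pick a path from the flow id alone, ignoring how busy each path is."""
    loads = [0] * num_paths
    for flow_id, size in enumerate(flow_sizes):
        loads[flow_id % num_paths] += size  # stand-in for a static hash
    return loads


def load_aware_assignment(flow_sizes: List[int], num_paths: int) -> List[int]:
    """Place each arriving flow on the path that currently carries the least load."""
    loads = [0] * num_paths
    for size in flow_sizes:
        least_loaded = loads.index(min(loads))  # first path with the minimum load
        loads[least_loaded] += size
    return loads


# Hypothetical traffic: two large flows mixed in with six small ones.
FLOW_SIZES = [40, 10, 10, 10, 40, 10, 10, 10]
NUM_PATHS = 4

static_loads = static_assignment(FLOW_SIZES, NUM_PATHS)
adaptive_loads = load_aware_assignment(FLOW_SIZES, NUM_PATHS)

print("Static loads:    ", static_loads, "-> busiest path:", max(static_loads))
print("Load-aware loads:", adaptive_loads, "-> busiest path:", max(adaptive_loads))
```

In this toy case both large flows collide on the same path under static assignment, so the busiest path carries 80 units; the load-aware version caps the busiest path at 50. Production adaptive routing works at much finer granularity and reacts to live telemetry, which this sketch does not attempt to model.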

Synergy of Hardware and Software

While NVIDIA often emphasizes hardware, DeLaere noted that software optimization is equally critical. The company is continually working to enhance performance through co-design, aligning hardware and software development to achieve maximum efficiency for AI systems.

Networking Solutions for the Future

The Spectrum-X platform represents NVIDIA’s first Ethernet solution purpose-built for AI tasks, effectively linking millions of GPUs while sustaining predictable performance across data centers. With congestion-control technology achieving up to 95% data throughput, Spectrum-X marks a significant advancement over conventional Ethernet.

Conclusion: A Unified Vision for AI

Integrated with NVIDIA’s full stack, including GPUs, CPUs, NVLink, and software, Spectrum-X delivers the reliable performance necessary to support trillion-parameter models and the next generation of generative AI workloads.

FAQs

What is Spectrum-X?

Spectrum-X is an Ethernet networking solution developed by NVIDIA, designed specifically for AI workloads, enabling efficient connectivity among multiple GPUs.

How does Spectrum-X improve AI data centers?

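Spectrum-X uses adaptive routing and telemetry-based congestion management to reduce network hotspots. NVIDIA says this yields up to 95% effective bandwidth, compared with roughly 60% for traditional Ethernet, which helps large AI clusters scale with predictable performance.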

Who are the major companies working with Spectrum-X?

Major companies like Meta and Oracle are adopting Spectrum-X to enhance their AI data infrastructures.

What is the anticipated impact of the Vera Rubin architecture?

The Vera Rubin architecture is expected to significantly boost AI processing capabilities, supporting large-scale AI factories by the end of 2026.

Why is open networking important for AI?

Open networking allows organizations to use various network operating systems and hardware configurations, providing flexibility and efficiency in managing AI workloads.
