Nvidia’s Shift: A New Era in AI Hardware

Nvidia’s Strategic Move in AI Hardware

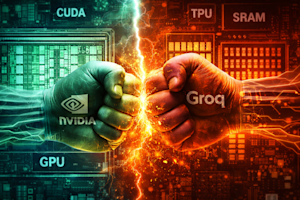

Nvidia’s recent $20 billion licensing agreement with Groq signals a significant shift in the AI hardware world. This partnership highlights the end of the era where one type of GPU was sufficient for all AI tasks. Instead, we’re entering an age where AI inference architectures are diversifying, driven by the need for both extensive context and real-time reasoning.

Understanding the Shift in AI Inference

To grasp why Nvidia’s CEO Jensen Huang is investing heavily in licensing agreements, we need to consider the competitive landscape. As of late 2025, the revenue generated from inference, where AI models actually execute, has surpassed that from training these models, marking an important moment in the industry. According to Deloitte, this shift means that accuracy is no longer the only metric; latency and the ability to maintain context in autonomous systems are now critical.

The Fragmentation of AI Workloads

The industry is now facing a fragmentation of inference workloads that traditional GPUs can’t keep up with. Nvidia’s licensing deal with Groq is a response to this challenge, indicating that the GPU market is evolving to cater to specialized tasks.

Disaggregated Architecture: Prefill vs. Decode

- Prefill Phase: This is where the model consumes and processes a huge volume of data—everything from long codebases to extensive video content. It’s a phase heavily reliant on computation and benefits from Nvidia’s strong legacy in matrix operations.

- Decode Phase: Here, the model generates outputs one token at a time, which requires moving data quickly between memory and compute. If the system can’t transfer data fast enough, the processor sits idle waiting on memory and performance drops significantly. This is a relative weakness for Nvidia’s GPUs and the area where Groq’s specialized silicon shines.
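The contrast between the two phases can be made concrete with a rough roofline-style estimate. The sketch below is illustrative only: it assumes about 2 FLOPs per parameter per token, 16-bit weights that are read once per forward pass, and a batch size of one, and it ignores attention and KV cache traffic. The accelerator figures are hypothetical round numbers, not the specifications of any Nvidia or Groq part.

```python
from dataclasses import dataclass


@dataclass
class Accelerator:
    """Hypothetical accelerator described by two headline numbers."""
    peak_flops: float      # peak compute, in FLOP/s
    mem_bandwidth: float   # memory bandwidth, in bytes/s

    @property
    def ridge_point(self) -> float:
        # FLOPs per byte needed before compute, not memory, is the limit.
        return self.peak_flops / self.mem_bandwidth


def arithmetic_intensity(tokens_per_pass: int, bytes_per_param: float = 2.0) -> float:
    """Approximate FLOPs per byte of weights read in one forward pass.

    Assumes ~2 FLOPs per parameter per token and that every weight is
    read once per pass, so the parameter count cancels out.
    """
    return 2.0 * tokens_per_pass / bytes_per_param


def limiting_factor(chip: Accelerator, tokens_per_pass: int) -> str:
    if arithmetic_intensity(tokens_per_pass) >= chip.ridge_point:
        return "compute-bound"
    return "memory-bound"


# Hypothetical chip: 1 PFLOP/s of compute, 4 TB/s of memory bandwidth.
chip = Accelerator(peak_flops=1e15, mem_bandwidth=4e12)

print("prefill, 8192 tokens per pass:", limiting_factor(chip, 8192))
print("decode, 1 token per pass:", limiting_factor(chip, 1))
```

Under these assumptions, prefill amortizes each weight read over thousands of tokens and is limited by compute, while decode reads the same weights to produce a single token and is limited by memory bandwidth. Batching several requests together raises decode intensity, which is one reason real deployments behave less starkly than this sketch.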

Nvidia’s Upcoming Innovations

Nvidia is preparing its Vera Rubin family of chips to tackle this architectural split. The Rubin CPX will be optimized for the prefill work, capable of handling context windows exceeding one million tokens. To manage costs and improve performance, Nvidia is equipping this chip with a more affordable memory type, GDDR7, in place of costly high-bandwidth memory (HBM).

The Role of Groq in Nvidia’s Future

By integrating Groq’s specialized chips into its inference pipeline, Nvidia aims to counter threats from other architectures and maintain the dominance of its CUDA software ecosystem. This move is expected to head off potential competition from specialized AI chips early, with the exception of a few major players such as Google’s TPUs. (CoinDesk)

The Advantages of SRAM Technology

At the core of Groq’s offerings is SRAM, which offers significant advantages over traditional memory types. Unlike the DRAM found in most computers, on-chip SRAM moves data over short distances at low energy cost, making it ideal for rapid, real-time processing. According to Michael Stewart, a venture fund partner, moving data within SRAM consumes far less energy than moving it from DRAM to the processor.

Market Segmentation and Potential

Because SRAM offers far less capacity than DRAM, this shift points to a new market segment focused on smaller AI models with fewer parameters. Though these models might not serve massive AI applications, they’re key for edge computing, low-latency workloads, and mobile devices, where running the model locally improves responsiveness and keeps user data private.

The Emergence of Portable AI Solutions

An important factor in Nvidia’s strategic maneuvers is the success of companies like Anthropic, which have developed portable AI stacks. This software layer allows AI models to function across various accelerator platforms, including both Nvidia and Google’s systems. With Anthropic’s recent commitment to work with Google’s TPUs extensively, Nvidia recognizes the need to improve its offerings and retain customers in a competitive marketplace.

Future Challenges: Memory and Statefulness

The recent acquisition of Manus by Meta underscores the importance of maintaining ‘state’ in AI agents. If an AI system can’t remember previous actions, its utility for tasks like market analysis or software development diminishes greatly. The KV cache plays a vital role here, functioning as the model’s short-term memory: it is built during the prefill phase and then read and extended at every step of the decode phase.
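To give a rough sense of why this short-term memory becomes a hardware problem, the sketch below estimates how much space a KV cache occupies as the context grows. It uses the standard accounting of one key vector and one value vector per token, per layer, per KV head. The model shape (32 layers, 8 KV heads, head dimension 128, 16-bit values) is a hypothetical example chosen for illustration, not the configuration of any model named in this article.

```python
def kv_cache_bytes(num_layers: int, num_kv_heads: int, head_dim: int,
                   seq_len: int, bytes_per_value: int = 2,
                   batch_size: int = 1) -> int:
    """Estimate the KV cache size in bytes for a transformer decoder.

    The leading factor of 2 counts one key and one value vector
    per token, per layer, per KV head.
    """
    return (2 * num_layers * num_kv_heads * head_dim
            * seq_len * bytes_per_value * batch_size)


# Hypothetical model shape, for illustration only.
model_shape = dict(num_layers=32, num_kv_heads=8, head_dim=128)

per_token = kv_cache_bytes(seq_len=1, **model_shape)
print(f"per token: {per_token / 1024:.0f} KiB")

for context_len in (8_192, 131_072, 1_000_000):
    gib = kv_cache_bytes(seq_len=context_len, **model_shape) / 2**30
    print(f"{context_len:>9} tokens: {gib:.1f} GiB")
```

The cache grows linearly with context length, so an agent that keeps a long history in memory needs both the capacity to hold the cache and the bandwidth to re-read it on every generated token.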

Nvidia’s Position in an Evolving Market

With these developments, Nvidia isn’t just reacting to the competitive scene; it’s actively redefining its role in an increasingly specialized and fragmented market. By embracing diverse technologies and building partnerships, the company aims to stay ahead in a rapidly changing industry. (Bitcoin.org)

Conclusion

Nvidia’s licensing deal with Groq is a clear signal of the shifts happening within the AI hardware world. As workloads become more specialized, the need for tailored solutions is more pressing than ever. The future of AI lies in adaptable architectures that can handle the demands of complex AI applications, and Nvidia is positioning itself to lead this innovation.

FAQs

What does Nvidia’s deal with Groq mean for AI hardware?

The partnership indicates a shift towards specialized architectures for AI inference, moving away from a one-size-fits-all GPU approach.

Why is SRAM important for AI processing?

SRAM allows for faster data movement with less energy consumption, making it ideal for real-time AI applications.

How is the market for AI chips evolving?

The market is fragmenting, with a growing focus on customized solutions for specific workloads rather than generic GPU solutions.

What role does the KV Cache play in AI models?

The KV Cache serves as a short-term memory for AI models, allowing them to retain context during processing.

How are companies like Anthropic influencing the AI space?

Anthropic’s development of portable AI stacks enables models to run across multiple platforms, increasing competition and innovation in the market.