Creating a Dynamic AI Architecture with LangGraph and OpenAI

Introduction to Advanced AI Systems

In this guide, we build a sophisticated AI architecture using LangGraph and OpenAI models. We’ll implement adaptive deliberation, which lets the AI switch between rapid and in-depth reasoning. We’ll also develop a memory graph that organizes knowledge and links related experiences. Finally, we’ll demonstrate a tool-use system that enforces operational constraints during execution.

Understanding the Components of Our AI System

To create a truly agentic AI, we need to integrate various components, including:

- Structured State Management: This allows the AI to control its internal state effectively.

- Memory-Aware Retrieval: This feature enables the AI to access past experiences efficiently.

- Reflexive Learning: This allows the AI to learn from its interactions and improve over time.

- Controlled Tool Invocation: This mechanism ensures that the AI uses its tools appropriately and within set boundaries.

By combining these components, the system can adapt to a wider range of scenarios. Each element helps the AI operate effectively, learning from its environment while adhering to predefined constraints. The goal isn’t merely to add features; it’s to create a cohesive system that can reason, remember, and act.
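As a rough illustration of structured state, the sketch below defines one possible state schema as a `TypedDict`, the kind of schema a LangGraph `StateGraph` can be constructed with. The keys `run_spec`, `decision`, and `budget_calls_remaining` mirror names used in this guide’s code; `AgentState`, `memory_hits`, `initial_state`, and the default budget of 8 are assumptions made for illustration.

```python
from typing import Any, Dict, List, TypedDict


class AgentState(TypedDict, total=False):
    """Hypothetical state schema; names beyond the guide's own are assumptions."""
    run_spec: Dict[str, Any]            # task description and constraints
    decision: Dict[str, Any]            # output of the deliberation step
    budget_calls_remaining: int         # remaining model/tool calls allowed
    memory_hits: List[Dict[str, Any]]   # assumed: notes retrieved from memory


def initial_state(goal: str, budget: int = 8) -> AgentState:
    """Build a starting state with an explicit call budget."""
    return {
        "run_spec": {"goal": goal},
        "decision": {},
        "budget_calls_remaining": budget,
        "memory_hits": [],
    }


state = initial_state("Summarize recent notes")
print(state["budget_calls_remaining"])
```

Keeping the budget inside the state means every node can read and update it, rather than relying on a global counter.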

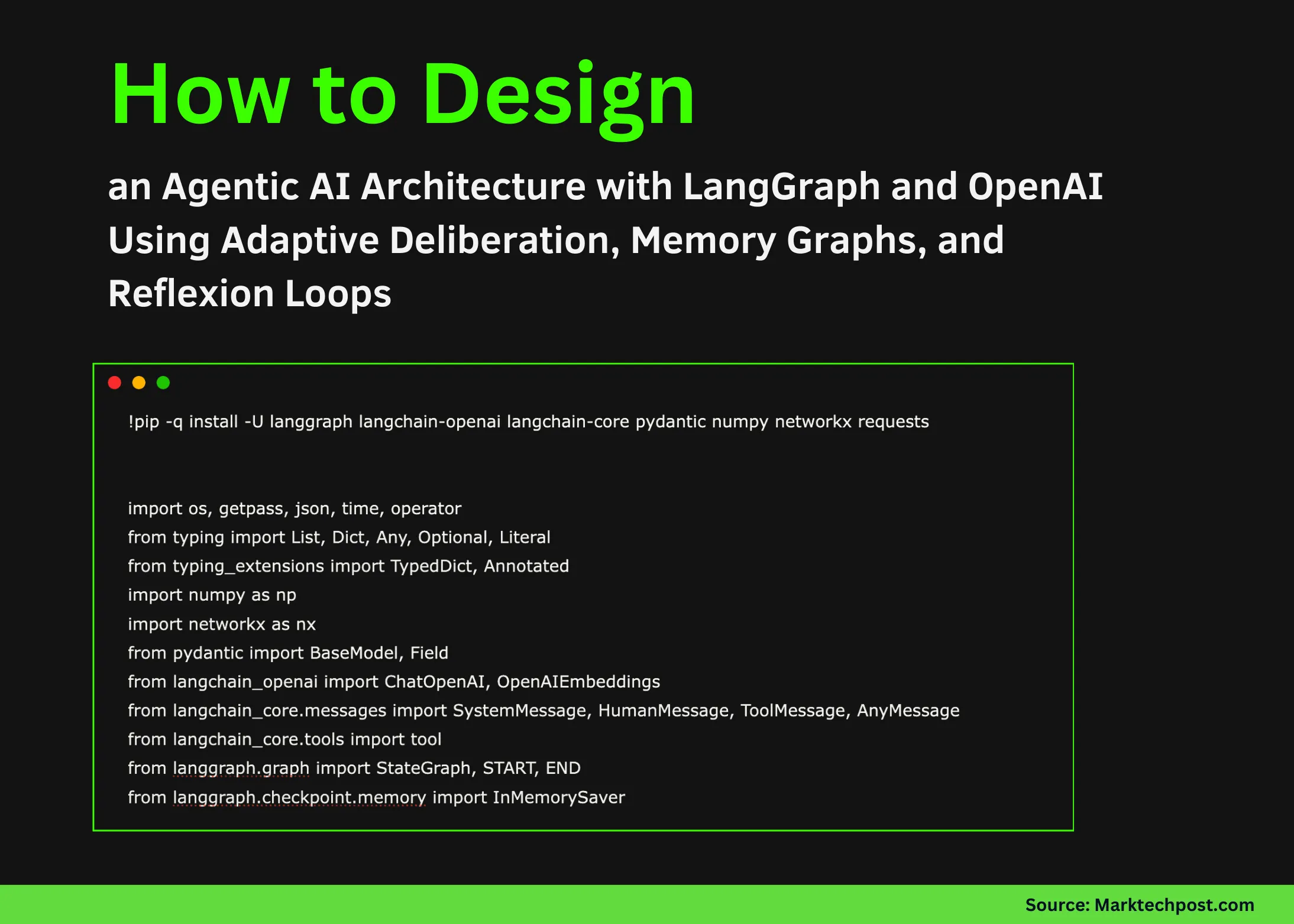

Setting Up the Environment

Before we start building, we need to prepare our environment by installing necessary libraries. Here’s a quick command to get started:

    !pip install langgraph langchain-openai langchain-core pydantic numpy networkx requests

Next, we import the modules we need to orchestrate our AI architecture:

    import os
    import json
    import getpass
    from typing import List, Literal

    import numpy as np
    import networkx as nx
    from pydantic import BaseModel
    from langchain_openai import ChatOpenAI, OpenAIEmbeddings
    from langchain_core.messages import SystemMessage, HumanMessage, ToolMessage
    from langchain_core.tools import tool
    from langgraph.graph import StateGraph

Loading the OpenAI API Key

It’s critical to securely load your OpenAI API key. Here’s how you can do that:

    # Prompt for the key only if it is not already set in the environment.
    if not os.environ.get("OPENAI_API_KEY"):
        os.environ["OPENAI_API_KEY"] = getpass.getpass("Enter your OpenAI API Key:")

Building the Memory Graph

Inspired by the Zettelkasten method, our memory graph stores individual notes as atomic units of knowledge. Each note can be linked to others based on semantic similarity. Here’s an outline of our memory graph class:

    class MemoryGraph:
        def __init__(self):
            self.g = nx.Graph()      # notes as nodes, links between notes as edges
            self.note_vectors = {}   # note_id -> embedding vector

In this class, we’ll manage connections between notes and evaluate their similarity:

        # Method of MemoryGraph: store the note's fields as node attributes
        # and keep its embedding vector for later similarity lookups.
        def add_note(self, note, vec):
            self.g.add_node(note.note_id, **note.model_dump())
            self.note_vectors[note.note_id] = vec

This structure supports efficient storage and allows dynamic connections between notes, which can lead to richer knowledge retrieval. By tapping into the semantic relationships between notes, the AI can better judge context and relevance, improving its responses and decisions.
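The guide’s code calls a `topk_related` method on the memory graph without showing it. The sketch below is one plausible way such a lookup could work, ranking stored vectors by cosine similarity to a query vector. It is written as a standalone function in pure Python so it runs without dependencies; the signature, the `(note_id, score)` return shape, and the sample vectors are assumptions, and a NumPy version would normally vectorize the arithmetic.

```python
import math
from typing import Dict, List, Sequence, Tuple


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    if na == 0.0 or nb == 0.0:
        return 0.0
    return dot / (na * nb)


def topk_related(
    note_vectors: Dict[str, Sequence[float]],
    query_vec: Sequence[float],
    k: int = 5,
) -> List[Tuple[str, float]]:
    """Return up to k (note_id, similarity) pairs, most similar first."""
    scored = [(nid, cosine(vec, query_vec)) for nid, vec in note_vectors.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


# Toy two-dimensional "embeddings" standing in for real ones.
vectors = {
    "n1": [1.0, 0.0],
    "n2": [0.0, 1.0],
    "n3": [1.0, 1.0],
}
print(topk_related(vectors, [1.0, 0.1], k=2))
```

The same scores could also decide which notes get an edge in the graph, for example by linking a new note to every existing note above a chosen similarity threshold.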

Integrating External Tools

Our AI can build on external tools to enhance its functionality. For example, we can create a function to fetch data from the web:

    @tool
    def web_get(url: str) -> str:
        """Fetch a URL and return up to 25,000 bytes of the response as text."""
        import urllib.request
        with urllib.request.urlopen(url, timeout=15) as r:
            return r.read(25000).decode("utf-8", errors="ignore")

We’ll also define a tool for memory retrieval:

    @tool
    def memory_search(query: str, k: int = 5) -> str:
        """Return the k stored notes most related to the query, as JSON."""
        # emb and MEM are assumed to be module-level instances of
        # OpenAIEmbeddings and MemoryGraph created elsewhere.
        qv = np.array(emb.embed_query(query))
        hits = MEM.topk_related(qv, k)
        return json.dumps(hits, ensure_ascii=False)

These tools expand what the AI can do and help it operate in real-world scenarios where information is constantly changing. By combining web data retrieval with memory search, the AI can keep its knowledge base relevant and up to date, which is necessary for reliable performance.
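To make the idea of operational constraints on tool use concrete, here is a minimal sketch of a URL policy check that could run before a web-fetching tool is called. The allowlisted hosts, the https-only rule, and the names `is_url_allowed` and `guarded_fetch` are all assumptions for illustration, not part of the guide’s code.

```python
from urllib.parse import urlparse

# Assumed policy values, chosen for illustration only.
ALLOWED_SCHEMES = {"https"}
ALLOWED_HOSTS = {"example.com", "docs.python.org"}


def is_url_allowed(url: str) -> bool:
    """Return True only for https URLs whose host is on the allowlist."""
    parsed = urlparse(url)
    host = (parsed.hostname or "").lower()
    return parsed.scheme in ALLOWED_SCHEMES and host in ALLOWED_HOSTS


def guarded_fetch(url: str, fetch) -> str:
    """Call fetch(url) only if the URL passes the policy check."""
    if not is_url_allowed(url):
        return f"BLOCKED: {url} is outside the allowed hosts"
    return fetch(url)


# A stand-in fetcher keeps the example offline.
print(guarded_fetch("https://example.com/page", lambda u: "fetched " + u))
print(guarded_fetch("http://internal.local/admin", lambda u: "fetched " + u))
```

Returning a short refusal string instead of raising lets the model see why the call was rejected and choose a different action.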

Structuring Decision-Making Processes

Our AI system needs to make informed decisions. We’ll set up structured schemas for its internal representation:

    class DeliberationDecision(BaseModel):
        mode: Literal["fast", "deep"]   # which reasoning strategy to use
        reason: str                     # short justification for the choice
        suggested_steps: List[str]      # proposed plan for the chosen mode

Schemas like this help the AI state its operational goals and constraints clearly, guiding its execution. With a structured approach to decision-making, the AI can keep its actions aligned with its objectives, whether it’s acting quickly on low-stakes decisions or engaging in more thorough analysis for complex scenarios.
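Once a decision with a `mode` of "fast" or "deep" is in the state, something has to turn it into a branch. The sketch below shows one way to write that routing step as a plain function that returns the name of the next node; in LangGraph, a function of this shape is what `StateGraph.add_conditional_edges` expects. The node names `fast_path` and `deep_path` and the minimum budget of 2 for deep reasoning are assumptions.

```python
from typing import Any, Dict


def route_after_deliberation(state: Dict[str, Any]) -> str:
    """Map the recorded decision to the name of the next node.

    Falls back to "fast_path" when the decision is missing or the budget
    is nearly exhausted, so a malformed state never triggers deep reasoning.
    """
    mode = state.get("decision", {}).get("mode", "fast")
    budget = state.get("budget_calls_remaining", 0)
    if mode == "deep" and budget >= 2:
        return "deep_path"
    return "fast_path"


print(route_after_deliberation(
    {"decision": {"mode": "deep"}, "budget_calls_remaining": 5}
))
```

Keeping the router free of model calls makes the branching logic cheap to unit test in isolation.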

Implementing the Agent’s Decision-Making

The heart of our AI lies in its ability to deliberate on actions based on its internal state. Here’s how we can execute its decisions:

    def deliberate(st):
        # Validate the run specification stored in the graph state.
        spec = RunSpec.model_validate(st["run_spec"])
        # Ask the fast model for a structured fast-vs-deep decision.
        d = llm_fast.with_structured_output(DeliberationDecision).invoke([
            SystemMessage(content="Decide fast vs deep."),
            HumanMessage(content=json.dumps(spec.model_dump())),
        ])
        # Return a partial state update and charge one call against the budget.
        return {"decision": d.model_dump(), "budget_calls_remaining": st["budget_calls_remaining"] - 1}

This deliberation step makes the AI’s choices more transparent and justifiable. Because each decision records its reason, the AI can explain its actions, which is important for trust and accountability in AI systems.
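The deliberation step decrements `budget_calls_remaining`, but nothing shown in the guide stops the agent when the budget reaches zero. As a hedged sketch, a small decorator can enforce that check around any node function. The names `with_budget`, `fake_deliberate`, and the `halt_reason` key are hypothetical, and the stub node stands in for a real model call.

```python
from typing import Any, Callable, Dict

State = Dict[str, Any]


def with_budget(node: Callable[[State], State]) -> Callable[[State], State]:
    """Wrap a node so it is skipped once the call budget is used up."""
    def guarded(state: State) -> State:
        remaining = state.get("budget_calls_remaining", 0)
        if remaining <= 0:
            # Flag the stop in a partial update instead of calling the model.
            return {"halt_reason": "budget_exhausted"}
        update = dict(node(state))
        # Charge one call if the wrapped node did not account for it itself.
        update.setdefault("budget_calls_remaining", remaining - 1)
        return update
    return guarded


@with_budget
def fake_deliberate(state: State) -> State:
    """Stand-in for an LLM-backed node; always chooses the fast mode."""
    return {"decision": {"mode": "fast", "reason": "stub", "suggested_steps": []}}


print(fake_deliberate({"budget_calls_remaining": 1}))
print(fake_deliberate({"budget_calls_remaining": 0}))
```

A routing function can then look for `halt_reason` in the state and send the run to a final node rather than back into the loop.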

Finalizing and Reflecting on Learnings

After executing various tasks, our AI should reflect on its performance to improve future outcomes. This reflection process can be formalized as follows:

    def reflect(st):
        # The message list is elided in the original; Reflection is a
        # structured-output schema that is not shown here.
        r = llm_reflect.with_structured_output(Reflection).invoke([...])

Reflection lets the AI analyze which strategies worked, which didn’t, and how to adjust its approach. This continuous learning loop helps the AI become more adept at handling complex tasks and environments.
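The guide does not show the `Reflection` schema or what happens to a reflection after it is produced. The sketch below uses a plain dataclass as a stand-in, with assumed fields, and flattens a reflection into a text note that could then be embedded and stored in the memory graph. `ReflectionSketch`, its field names, and `reflection_to_note` are all hypothetical.

```python
from dataclasses import asdict, dataclass, field
from typing import List


@dataclass
class ReflectionSketch:
    """Hypothetical stand-in for the guide's Reflection schema."""
    what_worked: List[str] = field(default_factory=list)
    what_failed: List[str] = field(default_factory=list)
    next_time: List[str] = field(default_factory=list)


def reflection_to_note(task: str, r: ReflectionSketch) -> str:
    """Flatten a reflection into one text note suitable for embedding."""
    parts = [f"Task: {task}"]
    for label, items in asdict(r).items():
        if items:  # skip empty sections to keep the note compact
            parts.append(f"{label}: " + "; ".join(items))
    return "\n".join(parts)


r = ReflectionSketch(
    what_worked=["searched memory before browsing"],
    next_time=["set a smaller page limit"],
)
print(reflection_to_note("summarize docs", r))
```

Storing reflections as ordinary notes means a later memory search can surface past lessons alongside factual notes.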

Conclusion

Combining LangGraph with OpenAI models gives us a flexible foundation for agentic systems. By implementing features like adaptive deliberation and memory graphs, we can develop an AI that learns and evolves over time. For implementation details, check out the full code.

Frequently Asked Questions

What is LangGraph?

LangGraph is a library from the LangChain ecosystem for building stateful LLM applications as graphs, where nodes are processing steps and edges control the flow between them. It provides tools for managing state across those steps.

How does adaptive deliberation work?

Adaptive deliberation allows the AI to choose between fast and deep reasoning strategies based on the context of the task, enhancing its decision-making efficiency.

What is the Zettelkasten method?

The Zettelkasten method is a note-taking system that promotes long-term knowledge retention and connections between ideas, making it suitable for constructing memory graphs in AI.

Why is reflection important in AI?

Reflection helps AI systems learn from past experiences, allowing them to improve their performance and adapt their strategies over time.

Where can I find the full code for this project?

You can access the complete code and resources for this project on GitHub at this link.