How to Build a Self-Organizing Agent Memory System for Long-Term AI Reasoning

If you want an AI agent that can reason over weeks or months (not just a single chat), you need a self-organizing memory system: one component that extracts and structures knowledge into durable “memory units,” plus a separate reasoning component that queries those units on demand. In practice, you’ll store structured facts, decisions, and summaries in a database (like SQLite), group them into scenes or topics, and periodically consolidate them so your agent doesn’t drown in raw logs. In this post, I’ll show you how to build that system and why it matters for crypto and blockchain workflows.

Why crypto agents need long-term memory (and why raw chat logs won’t cut it)

Crypto and blockchain work moves fast, yet the context you need is long-lived. You might be tracking a protocol’s governance proposals, monitoring validator performance, following a DeFi position’s risk profile, or maintaining an audit trail for compliance. Meanwhile, your agent can’t rely on “whatever was said earlier” because the conversation window is limited, and even large context windows get expensive and messy.

So, if you’re building an AI assistant for on-chain research, trading ops, DAO governance, or smart contract security, you’ve probably run into the same problem I have: the agent either forgets important decisions or repeats the same questions. Worse, it may hallucinate continuity because it “feels” like it remembers. That’s why a self-organizing memory system matters. Instead of storing everything, you store what’s meaningful.

Here’s the key shift: we don’t treat memory as a giant transcript. Instead, we treat memory as a set of structured knowledge units—facts, preferences, commitments, hypotheses, and outcomes—each with metadata and links to related units. Then, we let the agent retrieve only what it needs for the current task. As a result, your agent stays coherent over long horizons without depending on opaque vector-only retrieval.

It helps to remember that blockchains themselves are append-only ledgers optimized for verification, not for semantic recall. That’s why we’ll use a local database for semantic memory, and we’ll treat on-chain data as an external source of truth. If you want a refresher on the underlying chain architecture, the Ethereum developer documentation is a solid reference.

What “self-organizing memory” actually means

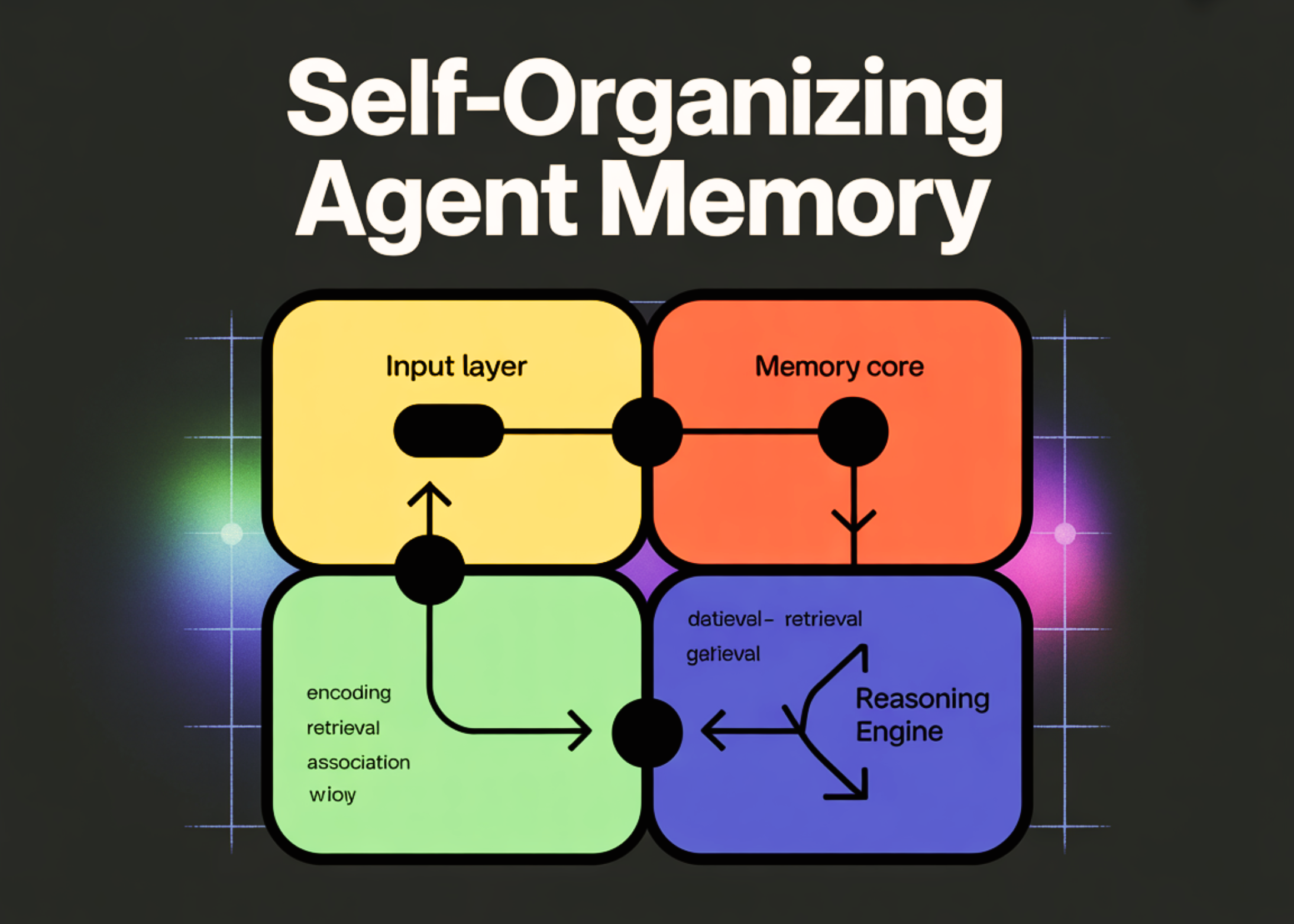

When I say “self-organizing,” I mean the system regularly reshapes its own stored knowledge. It doesn’t just append new entries. Instead, it:

- Extracts salient information from interactions

- Normalizes it into structured fields (who/what/when/why)

- Groups related items into “scenes” (projects, incidents, positions, proposals)

- Consolidates duplicates and resolves contradictions

- Produces rolling summaries that stay small and useful

Because of that, you can ask your agent, “What did we decide about the validator slashing risk last month?” and it won’t guess. It will retrieve the decision record, the rationale, and the follow-up tasks.

Why separate reasoning from memory management

If your main agent both chats and manages memory, it’ll get distracted, and it won’t be consistent. So we split responsibilities:

- Main agent: answers the user, plans tasks, and calls tools

- Memory manager: extracts knowledge, updates the database, and consolidates summaries

That separation is especially valuable in crypto, because your agent may need to be conservative and auditable. For example, if it recommends a treasury rebalancing, you’ll want to trace what it “knew” and when it learned it.
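One way to picture the split is as two small objects that share a store: only the memory manager writes, and the main agent only reads. This is a minimal sketch, not a full implementation. The class names are illustrative, and the `extract` and `answer` callables are hypothetical stand-ins for your LLM calls.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class MemoryStore:
    """Shared store; a plain list stands in for the database here."""
    units: list = field(default_factory=list)


class MemoryManager:
    """The only component that writes to the store."""

    def __init__(self, store: MemoryStore, extract: Callable[[str, str], list]):
        self.store = store
        self.extract = extract  # hypothetical LLM-backed extractor

    def observe(self, role: str, text: str) -> None:
        self.store.units.extend(self.extract(role, text))


class MainAgent:
    """Answers the user and only reads from the store."""

    def __init__(self, store: MemoryStore, answer: Callable[[str, list], str]):
        self.store = store
        self.answer = answer  # hypothetical LLM-backed responder

    def reply(self, user_text: str) -> str:
        # Pass a copy so the responder cannot add or remove stored units.
        return self.answer(user_text, list(self.store.units))


def run_turn(agent: MainAgent, manager: MemoryManager, user_text: str) -> str:
    """Answer first, then let the memory manager process both sides of the turn."""
    reply = agent.reply(user_text)
    manager.observe("user", user_text)
    manager.observe("assistant", reply)
    return reply


# Stubs so the sketch runs without a model.
def fake_extract(role, text):
    return [{"unit_type": "preference", "text": text}] if role == "user" else []


def fake_answer(user_text, units):
    return f"Answering with {len(units)} remembered unit(s)."


store = MemoryStore()
agent = MainAgent(store, fake_answer)
manager = MemoryManager(store, fake_extract)
first = run_turn(agent, manager, "We don't bridge via Y")
second = run_turn(agent, manager, "Can we bridge via Y?")
```

Because the main agent never writes, every stored unit can be traced back to a memory manager run, which is what makes the “what did it know and when” question answerable.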

Designing memory units for blockchain workflows (facts, decisions, and scenes)

Before we write code, we need the right data model. If you store vague “notes,” you’ll end up with a second transcript. Instead, we’ll store memory units with explicit types and fields. Also, we’ll keep them small so consolidation stays cheap.

I like to start with five unit types, then expand only when I must:

- Fact: a stable claim (e.g., “Protocol X uses a 7-day timelock”)

- Preference: user or org preference (e.g., “We don’t bridge via Y”)

- Decision: a committed choice plus rationale (e.g., “We’ll vote YES on Proposal 42 because…”)

- Task: an actionable item with status (e.g., “Rotate RPC keys by Friday”)

- Summary: a compressed view of a scene or time window

Then we add scenes. A scene is a durable container for related units: “Arbitrum validator ops,” “DAO governance Q1,” “Audit of Contract Z,” or “ETH staking strategy.” Scenes are the backbone of long-term reasoning because they let you retrieve context without searching the entire memory store.

A practical schema (SQLite) that stays simple

SQLite works well because it’s local, portable, and transparent. Plus, you can inspect it with standard tools, which you can’t always do with proprietary memory layers. If you’re unfamiliar with SQLite, the official docs are here: https://www.sqlite.org/index.html.

Here’s a schema that’s small but powerful:

- scenes(id, name, description, created_at, updated_at)

- memory_units(id, scene_id, unit_type, content_json, confidence, source, created_at, updated_at)

- links(from_unit_id, to_unit_id, relation)

- raw_events(id, scene_id, role, text, created_at)

Notice what we did: we keep raw events for auditability, but we don’t rely on them for recall. Instead, the agent queries memory_units and summaries. That’s how you avoid “vector soup” while still keeping provenance.
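Here’s one way the four tables could be created with Python’s built-in `sqlite3` module. The column types, defaults, and constraints are my assumptions, since the outline above only names the columns. The `open_memory` helper is hypothetical too.

```python
import sqlite3

SCHEMA = """
CREATE TABLE IF NOT EXISTS scenes (
    id          INTEGER PRIMARY KEY,
    name        TEXT NOT NULL UNIQUE,
    description TEXT,
    created_at  TEXT NOT NULL DEFAULT (datetime('now')),
    updated_at  TEXT NOT NULL DEFAULT (datetime('now'))
);
CREATE TABLE IF NOT EXISTS memory_units (
    id           INTEGER PRIMARY KEY,
    scene_id     INTEGER NOT NULL REFERENCES scenes(id),
    unit_type    TEXT NOT NULL,
    content_json TEXT NOT NULL,
    confidence   REAL NOT NULL DEFAULT 0.5
                 CHECK (confidence BETWEEN 0 AND 1),
    source       TEXT,
    created_at   TEXT NOT NULL DEFAULT (datetime('now')),
    updated_at   TEXT NOT NULL DEFAULT (datetime('now'))
);
CREATE TABLE IF NOT EXISTS links (
    from_unit_id INTEGER NOT NULL REFERENCES memory_units(id),
    to_unit_id   INTEGER NOT NULL REFERENCES memory_units(id),
    relation     TEXT NOT NULL,
    PRIMARY KEY (from_unit_id, to_unit_id, relation)
);
CREATE TABLE IF NOT EXISTS raw_events (
    id         INTEGER PRIMARY KEY,
    scene_id   INTEGER NOT NULL REFERENCES scenes(id),
    role       TEXT NOT NULL,
    text       TEXT NOT NULL,
    created_at TEXT NOT NULL DEFAULT (datetime('now'))
);
"""


def open_memory(path=":memory:"):
    """Open (or create) the memory database and make sure the tables exist."""
    conn = sqlite3.connect(path)
    conn.execute("PRAGMA foreign_keys = ON")  # SQLite leaves this off by default
    conn.executescript(SCHEMA)
    return conn


conn = open_memory()
table_names = {
    row[0]
    for row in conn.execute("SELECT name FROM sqlite_master WHERE type = 'table'")
}
```

Pass a file path instead of `:memory:` when you want the store to persist between runs.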

Scene-based grouping for crypto: examples you can steal

If you’re not sure how to define scenes, use the same boundaries you already use in crypto operations:

- Per protocol: “Uniswap v4 research,” “Lido risk review”

- Per chain: “Base ecosystem watch,” “Solana validator ops”

- Per portfolio: “Treasury stablecoin ladder,” “LP positions”

- Per incident: “Bridge exploit response,” “Oracle outage postmortem”

- Per governance cycle: “DAO votes March–April”

As a result, retrieval becomes intuitive. You ask for the “Bridge exploit response” scene and get the facts, the timeline, and what you decided—without dragging in unrelated chatter.

Implementation: a two-agent loop that extracts, consolidates, and retrieves

Now we’ll turn the design into a working system. We’ll use Python, SQLite, and an LLM call, organized into a clean architecture: (1) capture events, (2) extract memory units, (3) consolidate, and (4) retrieve for reasoning.

Although you can use many models, I’ll keep the interface generic. If you’re using OpenAI’s API, you can reference the platform docs here: https://platform.openai.com/docs/overview. Also, if you’re building crypto tooling that touches private keys, don’t pipe secrets into any model. Instead, store secrets in a vault and pass only identifiers.

Step 1: store raw events (but don’t rely on them for recall)

Each time the user or agent says something, we store it as a raw event tied to a scene. That gives you traceability, and it helps with later audits. However, we’ll keep raw events out of the main reasoning prompt most of the time.

Minimal event fields:

- scene_id

- role (user/assistant/tool)

- text

- timestamp (stored as created_at in the schema)

Then, on a schedule (or after each turn), we run the memory manager to extract durable units.
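Event capture can be as small as one insert plus a query for whatever the memory manager hasn’t processed yet. This sketch builds a standalone `raw_events` table so it runs on its own. The `log_event` and `events_after` names are hypothetical, and tracking progress by the last seen row id is my assumption.

```python
import sqlite3

VALID_ROLES = {"user", "assistant", "tool"}


def log_event(conn, scene_id, role, text):
    """Append one raw event and return its row id."""
    if role not in VALID_ROLES:
        raise ValueError(f"unknown role: {role!r}")
    cur = conn.execute(
        "INSERT INTO raw_events (scene_id, role, text) VALUES (?, ?, ?)",
        (scene_id, role, text),
    )
    conn.commit()
    return cur.lastrowid


def events_after(conn, scene_id, last_seen_id):
    """Events the memory manager has not processed yet, oldest first."""
    return conn.execute(
        "SELECT id, role, text, created_at FROM raw_events "
        "WHERE scene_id = ? AND id > ? ORDER BY id",
        (scene_id, last_seen_id),
    ).fetchall()


conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE raw_events (id INTEGER PRIMARY KEY, scene_id INTEGER NOT NULL, "
    "role TEXT NOT NULL, text TEXT NOT NULL, "
    "created_at TEXT NOT NULL DEFAULT (datetime('now')))"
)
first_id = log_event(conn, 1, "user", "Rotate RPC keys by Friday")
log_event(conn, 1, "assistant", "Noted. I will track that as a task.")
pending = events_after(conn, 1, first_id)  # only the assistant event remains
```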

Step 2: extract structured memory units with a “memory manager” prompt

This is where self-organization begins. The memory manager reads the latest event(s) and outputs JSON that fits your schema. It should be strict and boring. In other words, it shouldn’t freestyle prose. It should produce clean objects like:

- unit_type: “decision”

- content_json: { “decision”: “…”, “rationale”: “…”, “constraints”: […], “deadline”: “…” }

- confidence: 0–1

- source: “conversation” or “tool:etherscan”

For crypto, I also recommend storing “assumptions” explicitly. That way, if an assumption breaks (e.g., an oracle changes), you can find every decision that depended on it.
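Whatever model you use, it’s worth validating its output before anything touches the database. The sketch below accepts a batch only if every object fits the expected shape and returns an empty list otherwise. Treating “assumption” as a sixth unit type is my assumption, and `parse_units` is a hypothetical helper name.

```python
import json

ALLOWED_TYPES = {"fact", "preference", "decision", "task", "summary", "assumption"}


def _is_valid(unit):
    """Check one extracted object against the expected unit shape."""
    if not isinstance(unit, dict):
        return False
    unit_type = unit.get("unit_type")
    confidence = unit.get("confidence")
    source = unit.get("source")
    return (
        isinstance(unit_type, str)
        and unit_type in ALLOWED_TYPES
        and isinstance(unit.get("content_json"), dict)
        and isinstance(confidence, (int, float))
        and not isinstance(confidence, bool)  # bool is a subclass of int
        and 0 <= confidence <= 1
        and isinstance(source, str)
        and source != ""
    )


def parse_units(raw):
    """Return the validated units, or [] if anything in the batch is malformed."""
    try:
        data = json.loads(raw)
    except (TypeError, ValueError):  # JSONDecodeError is a ValueError
        return []
    if not isinstance(data, list) or not all(_is_valid(u) for u in data):
        return []
    return data


good = json.dumps([{
    "unit_type": "decision",
    "content_json": {"decision": "Vote YES on Proposal 42", "rationale": "..."},
    "confidence": 0.8,
    "source": "conversation",
}])
accepted = parse_units(good)
rejected = parse_units("Sure! Here is the JSON you asked for: [...]")
```

Rejecting the whole batch is deliberately strict. If you’d rather keep the valid units and drop only the bad ones, filter with `_is_valid` instead of using `all`.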

Step 3: consolidate and summarize so memory doesn’t bloat

Extraction alone isn’t enough, because you’ll still accumulate duplicates. So, every N events (or once per day), run consolidation:

- Find overlapping facts in the same scene

- Merge them into a canonical fact

- Mark older ones as superseded

- Generate a rolling scene summary (100–300 words)

As a result, your agent stays fast. Also, your database stays readable, which matters when you’re debugging a trading incident at 3 a.m.
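The merge-and-supersede part of consolidation can be sketched without a model. In this simplified version, “overlapping” means “same claim after lowercasing and collapsing whitespace”, which is a stand-in for whatever fuzzy or LLM-based matching you end up using. The `status` column and the `claim` key inside `content_json` are assumptions, and summary generation is left out.

```python
import json
import sqlite3
from collections import defaultdict


def normalize(claim):
    """Lowercase and collapse whitespace so trivial variants compare equal."""
    return " ".join(claim.lower().split())


def consolidate_facts(conn, scene_id):
    """Keep the newest copy of each duplicate fact; mark older copies superseded."""
    rows = conn.execute(
        "SELECT id, content_json FROM memory_units "
        "WHERE scene_id = ? AND unit_type = 'fact' AND status = 'active' "
        "ORDER BY id",
        (scene_id,),
    ).fetchall()
    groups = defaultdict(list)
    for unit_id, content in rows:
        key = normalize(str(json.loads(content).get("claim", "")))
        if key:  # skip units without a claim rather than merging them together
            groups[key].append(unit_id)
    superseded = 0
    for ids in groups.values():
        canonical, older = ids[-1], ids[:-1]
        for old_id in older:
            conn.execute(
                "UPDATE memory_units SET status = 'superseded' WHERE id = ?",
                (old_id,),
            )
            conn.execute(
                "INSERT INTO links (from_unit_id, to_unit_id, relation) "
                "VALUES (?, ?, 'supersedes')",
                (canonical, old_id),
            )
            superseded += 1
    conn.commit()
    return superseded


conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE memory_units (
    id INTEGER PRIMARY KEY, scene_id INTEGER, unit_type TEXT,
    content_json TEXT, status TEXT NOT NULL DEFAULT 'active');
CREATE TABLE links (from_unit_id INTEGER, to_unit_id INTEGER, relation TEXT);
""")
for claim in [
    "Protocol X uses a 7-day timelock",
    "protocol x  uses a 7-day timelock",
    "Vault Z has a 2-day cooldown",
]:
    conn.execute(
        "INSERT INTO memory_units (scene_id, unit_type, content_json) "
        "VALUES (1, 'fact', ?)",
        (json.dumps({"claim": claim}),),
    )
merged = consolidate_facts(conn, 1)
```

Older copies are marked rather than deleted, so the audit trail survives consolidation.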

Step 4: retrieval that’s transparent (SQL + light semantics)

When the main agent needs context, it should ask for:

- The scene summary

- The top relevant decisions

- Open tasks

- High-confidence facts

You can do this with straightforward SQL filters, plus optional keyword search. If you want semantic search, you can add embeddings later, but you don’t have to start there. That’s the point: you can keep retrieval explainable.
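Those four lookups map onto plain SQL filters. In this sketch, “top relevant” is approximated by recency, task status lives inside `content_json`, and the 0.8 confidence threshold is arbitrary. All three are assumptions, as is the `build_context` helper name.

```python
import json
import sqlite3


def _units(conn, scene_id, unit_type, min_conf=0.0, limit=5):
    """Most recently updated units of one type, decoded from JSON."""
    rows = conn.execute(
        "SELECT content_json FROM memory_units "
        "WHERE scene_id = ? AND unit_type = ? AND confidence >= ? "
        "ORDER BY updated_at DESC, id DESC LIMIT ?",
        (scene_id, unit_type, min_conf, limit),
    ).fetchall()
    return [json.loads(row[0]) for row in rows]


def build_context(conn, scene_id, min_fact_conf=0.8):
    """Assemble the context bundle the main agent receives for one scene."""
    summaries = _units(conn, scene_id, "summary", limit=1)
    tasks = _units(conn, scene_id, "task", limit=50)
    return {
        "summary": summaries[0] if summaries else None,
        "decisions": _units(conn, scene_id, "decision"),
        "open_tasks": [t for t in tasks if t.get("status") != "done"],
        "facts": _units(conn, scene_id, "fact", min_conf=min_fact_conf),
    }


conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE memory_units (id INTEGER PRIMARY KEY, scene_id INTEGER, "
    "unit_type TEXT, content_json TEXT, confidence REAL, "
    "updated_at TEXT DEFAULT (datetime('now')))"
)
demo_rows = [
    ("summary", {"text": "Governance principles and open votes"}, 0.9),
    ("decision", {"decision": "Vote YES on Proposal 42"}, 0.9),
    ("task", {"title": "Rotate RPC keys", "status": "open"}, 0.9),
    ("task", {"title": "Archive old votes", "status": "done"}, 0.9),
    ("fact", {"claim": "Protocol X uses a 7-day timelock"}, 0.95),
    ("fact", {"claim": "Fees may change next quarter"}, 0.3),
]
conn.executemany(
    "INSERT INTO memory_units (scene_id, unit_type, content_json, confidence) "
    "VALUES (1, ?, ?, ?)",
    [(unit_type, json.dumps(content), conf) for unit_type, content, conf in demo_rows],
)
context = build_context(conn, 1)
```

Every item in `context` corresponds to a row you can inspect by hand, which is the explainability you give up with vector-only retrieval.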

Making it “crypto-native”: provenance, auditability, and on-chain references

In the blockchain niche, memory can’t be a black box. You’ll want to know where a claim came from, whether it’s still true, and what it affected. So, we’ll add three crypto-native patterns: provenance fields, on-chain anchors, and verification hooks.

Provenance fields you should store from day one

Every memory unit should carry:

- source: conversation, tool output, report link, on-chain query

- source_ref: URL, tx hash, block number, proposal ID

- created_at / updated_at: when the agent learned it

- confidence: your system’s belief level

That way, when your agent says “This vault has a 2-day cooldown,” you can click the reference or re-check the contract. For on-chain references, explorers are a practical baseline. For Ethereum, you can use Etherscan as a human-auditable source, even if your tools query nodes directly.

On-chain anchors: store tx hashes and proposal IDs as “facts with receipts”

Whenever the agent observes something on-chain—like a governance vote, a contract upgrade, or a treasury transfer—store it as a fact with a receipt:

- “Treasury transferred 250 ETH to multisig B on 2026-02-01”

- source_ref: tx hash

- chain_id: 1

- block_number: …

As a result, your memory system becomes defensible. You won’t just “remember” that something happened—you’ll be able to prove it.
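A small constructor can refuse to create an on-chain fact unless the receipt at least looks right. This sketch checks only the shape of an Ethereum transaction hash (`0x` plus 64 hex characters) and does not confirm that the transaction exists. The `anchored_fact` helper and its field layout are assumptions, and the hash and block number in the example are placeholders, not real chain data.

```python
import re

TX_HASH = re.compile(r"0x[0-9a-fA-F]{64}")


def anchored_fact(claim, tx_hash, chain_id, block_number, confidence):
    """Build a fact unit that carries its on-chain receipt."""
    if not TX_HASH.fullmatch(tx_hash):
        raise ValueError("tx_hash must be 0x followed by 64 hex characters")
    if chain_id <= 0 or block_number < 0:
        raise ValueError("chain_id must be positive and block_number non-negative")
    return {
        "unit_type": "fact",
        "content_json": {
            "claim": claim,
            "chain_id": chain_id,
            "block_number": block_number,
        },
        "source": "on-chain query",
        "source_ref": tx_hash.lower(),
        "confidence": confidence,
    }


placeholder_hash = "0x" + "ab" * 32  # placeholder, not a real transaction
fact = anchored_fact(
    "Treasury transferred 250 ETH to multisig B on 2026-02-01",
    placeholder_hash,
    chain_id=1,
    block_number=123,  # placeholder, not a real block
    confidence=0.9,
)
```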

Verification hooks: don’t let stale memory mislead your agent

Crypto reality changes quickly. So, some memory units should expire or trigger re-verification. For example:

- APYs, interest rates, and liquidity conditions should have short TTLs

- Contract ownership and admin roles should be re-checked after upgrades

- Bridge statuses and risk flags should be re-validated daily

Therefore, add a field like valid_until or needs_review_at. Then, when the agent retrieves a fact past its review date, it should call a tool to verify it before using it in reasoning.
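A review-date check is only a few lines. The sketch below assumes `needs_review_at` is stored as a timezone-aware ISO 8601 string and that `verify` is a hypothetical tool call returning refreshed content. The one-day TTL is also just an example value.

```python
from datetime import datetime, timedelta, timezone


def is_stale(unit, now):
    """True when the unit has a review date and that date has passed."""
    review_at = unit.get("needs_review_at")
    return review_at is not None and datetime.fromisoformat(review_at) <= now


def fresh_fact(unit, verify, now=None, ttl=timedelta(days=1)):
    """Return the unit as-is if still valid, otherwise re-verify it first."""
    now = now or datetime.now(timezone.utc)
    if not is_stale(unit, now):
        return unit
    refreshed = dict(unit)
    refreshed["content"] = verify(unit)  # hypothetical tool call
    refreshed["needs_review_at"] = (now + ttl).isoformat()
    return refreshed


now = datetime(2026, 2, 10, tzinfo=timezone.utc)
apy = {
    "content": {"claim": "Pool APY is 4.1%"},
    "needs_review_at": "2026-02-09T00:00:00+00:00",
}
timelock = {
    "content": {"claim": "Protocol X uses a 7-day timelock"},
    "needs_review_at": None,  # no review date, so never treated as stale
}
calls = []


def fake_verify(unit):
    """Stand-in for an on-chain or API lookup."""
    calls.append(unit["content"]["claim"])
    return {"claim": "Pool APY is 3.8%"}


checked_apy = fresh_fact(apy, fake_verify, now=now)
checked_timelock = fresh_fact(timelock, fake_verify, now=now)
```

Only the expired APY triggers the verifier. The timelock fact passes through untouched.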

If you’re building around Ethereum standards, it also helps to know what “standard behavior” looks like. The Ethereum Improvement Proposals (EIPs) site is a good reference point for expected interfaces and upgrade patterns.

Operational tips: prompts, guardrails, and avoiding “memory poisoning”

Once you deploy this, you’ll notice a new class of problems: bad memory. Users can contradict themselves, tools can fail, and attackers can try to inject malicious instructions. So, we need guardrails.

Prompting rules that keep memory clean

For the memory manager, I use constraints like:

- Only store information that’s stable or decision-relevant

- Prefer explicit numbers, addresses, and identifiers

- Don’t store secrets (keys, seed phrases, private endpoints)

- When in doubt, store as an “assumption” with low confidence

Also, I keep the output format strict JSON. If it can’t comply, it should return an empty list. That’s important because you can’t afford half-parsed memory in production.

Handling contradictions without breaking your agent

Contradictions will happen. So, don’t overwrite facts silently. Instead:

- Create a new unit with the updated claim

- Link it to the old unit with relation “contradicts” or “supersedes”

- Ask the user to confirm when the conflict affects decisions

As a result, your agent stays honest. It won’t pretend the past didn’t happen, and you won’t lose the audit trail.
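In code, “don’t overwrite” means an insert plus a link, never an update of the old row. This is a minimal sketch with a hypothetical `record_update` helper and a pared-down table layout.

```python
import json
import sqlite3


def record_update(conn, old_id, new_content, relation="supersedes"):
    """Store a new unit and link it to the one it replaces or disputes."""
    if relation not in ("supersedes", "contradicts"):
        raise ValueError(f"unsupported relation: {relation!r}")
    row = conn.execute(
        "SELECT scene_id, unit_type FROM memory_units WHERE id = ?", (old_id,)
    ).fetchone()
    if row is None:
        raise LookupError(f"no memory unit with id {old_id}")
    cur = conn.execute(
        "INSERT INTO memory_units (scene_id, unit_type, content_json) "
        "VALUES (?, ?, ?)",
        (row[0], row[1], json.dumps(new_content)),
    )
    new_id = cur.lastrowid
    conn.execute(
        "INSERT INTO links (from_unit_id, to_unit_id, relation) VALUES (?, ?, ?)",
        (new_id, old_id, relation),
    )
    conn.commit()
    return new_id


conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE memory_units (
    id INTEGER PRIMARY KEY, scene_id INTEGER, unit_type TEXT, content_json TEXT);
CREATE TABLE links (from_unit_id INTEGER, to_unit_id INTEGER, relation TEXT);
""")
conn.execute(
    "INSERT INTO memory_units (scene_id, unit_type, content_json) VALUES (1, 'fact', ?)",
    (json.dumps({"claim": "Protocol X uses a 7-day timelock"}),),
)
new_id = record_update(
    conn, 1, {"claim": "Protocol X uses a 2-day timelock"}, relation="contradicts"
)
old_claim = json.loads(
    conn.execute("SELECT content_json FROM memory_units WHERE id = 1").fetchone()[0]
)
```

The old claim is still readable after the update, and the `contradicts` link is what the agent can use to decide whether to ask the user for confirmation.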

Preventing memory poisoning and instruction injection

If you let arbitrary user text become “system truth,” you’ll get burned. Therefore:

- Never store user instructions as “preferences” unless they’re confirmed

- Tag untrusted claims as “user_reported” with lower confidence

- Require receipts for on-chain claims (tx hash, block, contract call)

- Keep a denylist of sensitive patterns (seed phrases, private keys)

Also, treat tool outputs as more reliable than user claims, but not infallible. Tools can be wrong, and RPCs can lie under certain threat models. So, for high-stakes actions, you can require multi-source verification.
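Here’s a rough sketch of two of those guardrails: tagging claims by origin, and requiring agreement across sources before acting. The confidence caps (0.4 and 0.8), the quorum of two, and both helper names are assumptions chosen for illustration.

```python
from collections import Counter


def tag_claim(text, origin, on_chain=False, receipt=None):
    """Draft a unit with a trust tag, or return None if it lacks a receipt."""
    if on_chain and not receipt:
        return None  # on-chain claims need a tx hash, block, or contract call
    if origin == "user":
        source, confidence = "user_reported", 0.4
    elif origin == "tool":
        source, confidence = "tool", 0.8
    else:
        raise ValueError(f"unknown origin: {origin!r}")
    return {
        "claim": text,
        "source": source,
        "source_ref": receipt,
        "confidence": confidence,
    }


def quorum_value(readings, quorum=2):
    """Return the value reported by at least `quorum` sources, else None."""
    if not readings:
        return None
    value, count = Counter(readings).most_common(1)[0]
    return value if count >= quorum else None


unbacked = tag_claim("The upgrade already executed", "user", on_chain=True)
reported = tag_claim("We don't bridge via Y", "user")
# Three hypothetical RPC providers asked for the same contract owner.
owner = quorum_value(["0xabc", "0xabc", "0xdef"])
split = quorum_value(["0xabc", "0xdef"])
```

When `quorum_value` returns `None`, the safe default is to skip the action and flag the disagreement for a human.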

Putting it all together: a realistic flow for a DAO governance agent

Let’s walk through a concrete crypto example so you can see how this feels end-to-end. Imagine you’re running a DAO governance agent that helps you track proposals and vote consistently.

Day 1: you discuss voting principles

You tell the agent: “We won’t support proposals that increase inflation without a burn mechanism.” The memory manager stores a preference unit in the “DAO governance” scene. It also stores a short summary: your governance philosophy and any exceptions.

Day 12: a new proposal drops

You paste the proposal link and ask, “Should we support this?” The main agent retrieves:

- Scene summary (your principles)

- Past decisions on similar proposals

- Open questions or tasks

Then it analyzes the proposal. If it needs verification, it calls tools (Snapshot API, forum scrape, on-chain calls). After you decide, the memory manager stores a decision with rationale and a task to vote before the deadline.

Day 60: you ask for a quarterly review

You ask, “What patterns do you see in our votes?” The system consolidates decisions into a higher-level summary: categories of proposals, outcomes, and deviations from your principles. Because memory is structured, the agent doesn’t have to re-read two months of chat. Instead, it queries decision units and produces a clean report.

Why this beats “just use embeddings”

Embeddings can help, but they’re not enough on their own. They’re hard to audit, and they don’t naturally represent commitments, contradictions, or time-based validity. With structured memory, you can still add embeddings later for fuzzy recall, yet you won’t lose transparency. In crypto, that tradeoff matters.

FAQ

What’s the difference between agent memory and a normal database of notes?

A normal notes database is passive: you write and search. Agent memory is active: it extracts structured units automatically, links them to scenes, and consolidates them over time. Because of that, your agent can reason with the memory instead of just retrieving text blobs.

Do I need vector embeddings for long-term AI reasoning?

No, you don’t. You can get surprisingly far with scene summaries, structured units, and SQL queries. However, embeddings can be a helpful add-on for fuzzy matching and discovery, especially when your scene boundaries aren’t perfect.

How do I keep the system from storing sensitive crypto data like private keys?

You should implement hard filters before anything hits the database: detect key-like patterns, seed phrase formats, and secrets, then redact or block them. Also, instruct your memory manager never to store secrets. Even then, you can’t rely on prompts alone, so you need code-level enforcement.
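Code-level enforcement can start as a pattern check that runs before every write. One catch: a raw 64-character hex private key has the same shape as a transaction hash, so this sketch only flags hex or word sequences that are labeled as keys or seed phrases. That means it will miss unlabeled secrets. The patterns and helper names are illustrative assumptions, not a complete filter.

```python
import re

SECRET_PATTERNS = [
    # "private key: 0x<64 hex>" and close variants such as private_key=...
    re.compile(
        r"\b(?:private|secret)[ _-]?key\b\W{0,10}(?:0x)?[0-9a-f]{64}\b",
        re.IGNORECASE,
    ),
    # "seed phrase: word word ..." with at least 12 words after the label
    re.compile(
        r"\b(?:seed phrase|mnemonic|recovery phrase)\b\W{0,10}"
        r"(?:[a-z]+\s+){11,}[a-z]+",
        re.IGNORECASE,
    ),
    # PEM-encoded private key header
    re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
]


def contains_secret(text):
    """True if any labeled secret pattern appears in the text."""
    return any(pattern.search(text) for pattern in SECRET_PATTERNS)


def store_if_safe(text, sink):
    """Append text to the sink only when no secret pattern matches."""
    if contains_secret(text):
        return False
    sink.append(text)
    return True


stored = []
hex64 = "ab" * 32  # placeholder hex, not a real key or transaction
store_if_safe(f"Treasury transfer confirmed, tx 0x{hex64}", stored)
store_if_safe(f"my private key: 0x{hex64}", stored)
store_if_safe("seed phrase: " + " ".join(["word"] * 12), stored)
```

Only the transaction note ends up in `stored`. Both labeled secrets are blocked before they reach the database.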

How often should I run consolidation and summarization?

For most crypto agents, I’d consolidate on a schedule (daily) and also after major events (a vote, an incident, a trade). If your scenes are high-volume—like price monitoring—you may consolidate every N events. The goal is that summaries stay current and units don’t sprawl.

Can this memory system help with compliance and audit trails?

Yes. If you store provenance (source, timestamps, tx hashes, proposal IDs) and keep raw events, you’ll have a clear trail of what the agent saw, what it concluded, and what it recommended. That’s especially useful for treasury operations, governance, and incident response where you must justify actions later.